Introduction: The Silent Bottleneck of the AI Revolution

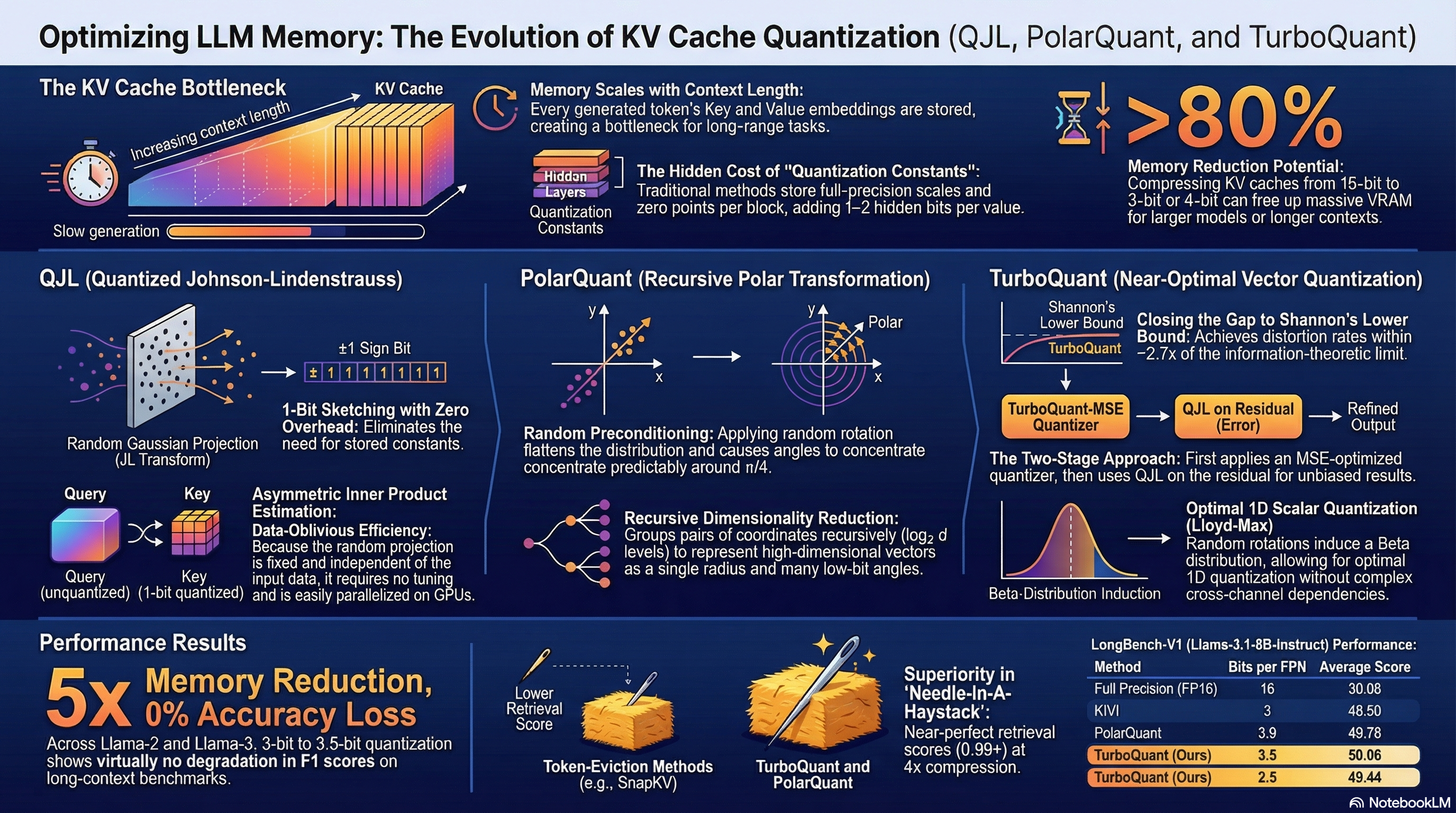

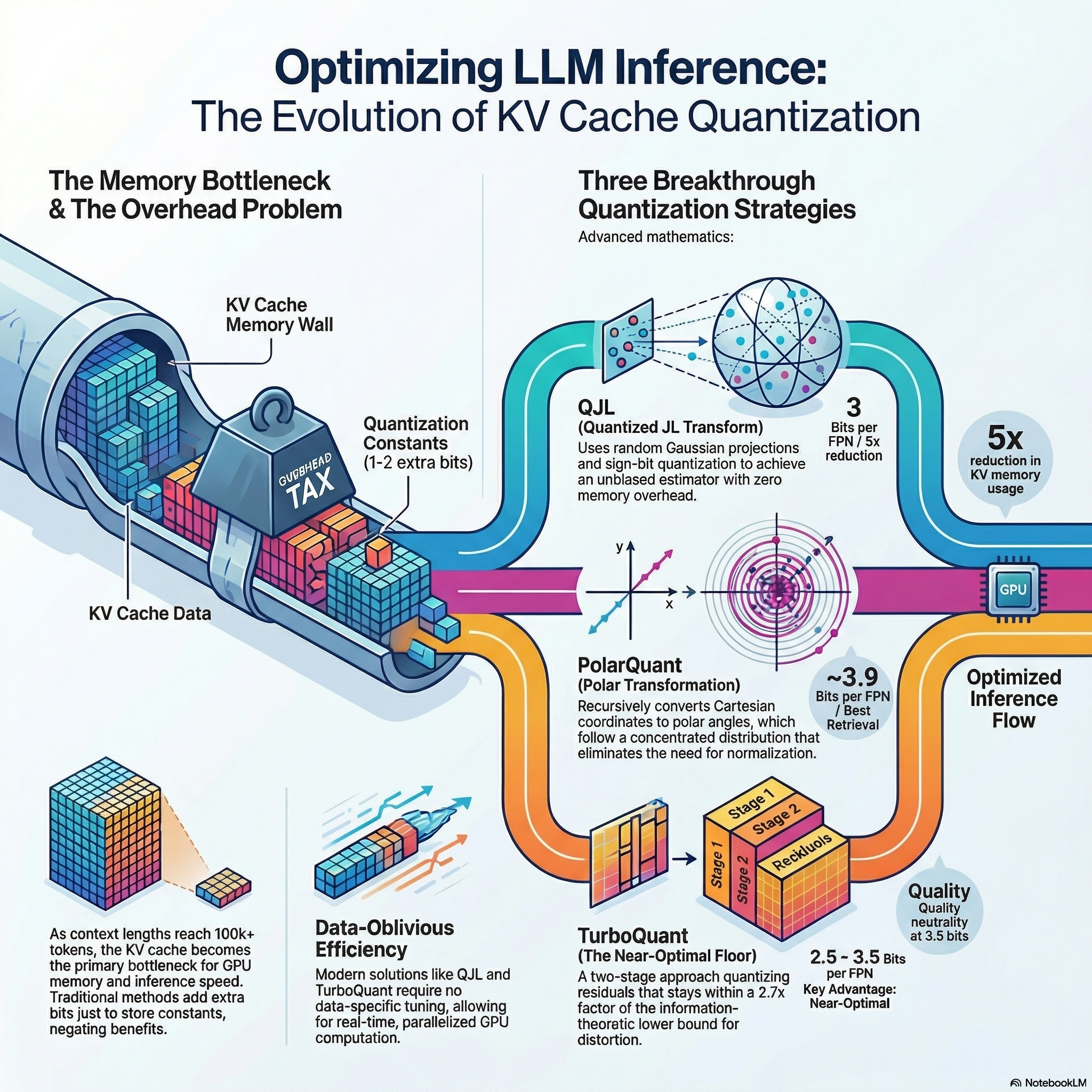

As Large Language Models (LLMs) evolve, their "intelligence" is increasingly defined by the context window—the ability to maintain a coherent "thread of thought" across documents spanning hundreds of thousands of tokens. However, as we scale toward million-token horizons, we have collided with a physical limit: the "Memory Wall."

Contrary to popular belief, GPUs are not running out of memory because of the model weights; they are suffocating under the weight of the KV Cache—the mathematical representations (keys and values) of every previous word stored to avoid redundant computation.

In high-performance inference, the primary bottleneck is the HBM (High Bandwidth Memory) to SRAM transfer. Loading the entire KV cache from the GPU's main memory for every single generated token results in low arithmetic intensity, leaving expensive compute cores idle while they wait for data.

To break this wall, we are moving beyond brute-force hardware toward a new wave of "data-oblivious" mathematical techniques—specifically QJL, PolarQuant, and TurboQuant. These breakthroughs allow us to compress this memory by 5x or more, nearing the theoretical Shannon limits of information theory without sacrificing a single point of accuracy.

The Rotation Paradox: Why Making Data "Messy" Makes it Easier to Compress

One of the most counter-intuitive discoveries in modern systems architecture is that randomly "scrambling" data makes it easier to store. Traditionally, LLM data is difficult to compress because it contains "outliers"—fixed channels in deeper layers with massive magnitudes that break standard quantization scales.

The solution, utilized by both TurboQuant and PolarQuant, is Orthogonal Preconditioning. By applying a random rotation matrix $\Pi$ (generated via QR decomposition) to the embedding vectors, we perform a "mathematical blending" of the data. This transformation ensures that the coordinates of the rotated vectors converge to a highly predictable Gaussian-like distribution, specifically $N(0, 1/d)$ in high dimensions ($d$).

This preconditioning removes the "hidden tax" of traditional quantization. Usually, engineers must store "quantization constants"—full-precision zero points and scaling factors for every small block of data—to manage outliers. Orthogonal preconditioning makes the data so uniform that these constants become unnecessary.

Traditional quantization methods face significant memory overhead due to the need to store quantization constants (at least a zero point and a scale) in full precision per data block. QJL eliminates memory overheads by removing the need for storing quantization constants.

Ditch the Grid: The Case for Polar Thinking

Standard AI memory storage uses Cartesian coordinates—the traditional grid of X and Y values. However, PolarQuant demonstrates that for high-dimensional vectors, we should be thinking in "Angles and Radii."

Using a Recursive Polar Transformation, PolarQuant breaks a $d$-dimensional vector into a hierarchy of dimensions (Level 1, Level 2, up to $\log_2 d$). At each level, dimensions are halved ($d/2, d/4, \dots$), eventually producing a single final radius and a series of angle vectors. This is mathematically elegant because, in high-dimensional AI embeddings, the "direction" (the angle) carries the essential semantic information, while the exact "position" (the radius) is less critical for attention accuracy.

After preconditioning, these angles concentrate predictably around $\pi/4$. By focusing bit-allocation on these concentrated angles, PolarQuant achieves a near-perfect score of 0.995+ on the "Needle-In-A-Haystack" test using Llama-3.1-8B-Instruct. It effectively maintains full recall across context lengths up to 104K tokens, significantly outperforming token-eviction methods like SnapKV or PyramidKV.

The Magic of the Sign Bit: Getting to 1-Bit Accuracy

The most radical compression occurs in the QJL (Quantized Johnson-Lindenstrauss) transform. QJL proves that we can reduce a "Key" embedding in the cache to just a single bit—the sign bit $(sign(S_k))$—and still retrieve the truth.

The secret lies in the "Asymmetric" estimator. If we were to quantize both the Query and the Key to a single bit, the resulting estimator would be biased, requiring a complex cosine correction. However, by keeping the Query in high precision while compressing the Key to 1-bit, the math remains linear and provides an unbiased estimator of the original inner product.

The inner product is estimated as:

$$\langle Q, K \rangle \approx \frac{d}{\pi} \cdot \text{sign}(Q) \cdot \text{sign}(K)$$

This 1-bit approach represents a fivefold reduction in KV cache memory usage. Because the required bits scale only logarithmically with the context length and remain independent of the embedding dimension, we can theoretically run massive, long-context models on consumer-grade hardware that would otherwise be restricted to server-grade HBM capacities.

Reaching the "Shannon Limit": The Near-Optimal Future

We are finally approaching the "Shannon Distortion-Rate" limit—the absolute mathematical speed limit of data compression. TurboQuant represents the pinnacle of this effort, matching the Shannon Lower Bound within a small constant factor of approximately $2.7x$.

TurboQuant's secret is a sophisticated two-stage process designed to eliminate the bias found in standard Mean-Squared Error (MSE) quantizers.

- Stage 1: It applies an MSE-optimal scalar quantizer (using the Lloyd-Max algorithm) to the rotated data.

- Stage 2: It applies a 1-bit QJL transform specifically to the residual error left over from Stage 1.

This hybrid approach ensures "absolute quality neutrality" at 3.5 bits per channel. To reach even higher efficiency, TurboQuant employs a "Prioritized Resource Allocation" strategy for its 2.5-bit mode. For a standard 128-dimension head, it identifies and allocates 3 bits to 32 outlier channels while compressing the remaining 96 inlier channels to just 2 bits. This prioritized allocation ensures that the most semantically dense parts of the AI's memory are preserved with the highest fidelity.

From Megabytes to Kilobytes

The transition from QJL to PolarQuant and TurboQuant marks a fundamental shift in AI systems architecture. We are moving away from the "brute-force" era of simply buying more VRAM toward an era of "mathematically optimized" memory.

By utilizing orthogonal preconditioning, recursive polar coordinates, and asymmetric two-stage estimators, we have effectively shrunk the AI's memory footprint by over 80% without shrinking its cognitive capacity.

If we can now compress an AI's memory by 80% with zero loss in intelligence, what is the true limit of how much context—and history—a single machine can remember?

Research & Resources

- TurboQuant: Redefining AI Efficiency with Extreme Compression — Google Research official blog post introducing TurboQuant techniques.

- TurboQuant Paper (arXiv:2504.19874) — Complete mathematical framework and experimental validation.

- PolarQuant Paper (arXiv:2406.03482) — Polar coordinate transformation for KV cache compression.

- QJL & Asymmetric Quantization (arXiv:2502.02617) — Quantized Johnson-Lindenstrauss transform and single-bit compression theory.